FOTechHub Annual Conference (Nov 18-19, 2025) Panel: AI in Action: From Hype to High-Impact Results

The second panel cut through the hype. Three single family offices (SFOs), each with a different scale, tech stack, and risk profile, revealed how they’re deploying AI today, which platforms they’re betting on, and which problems actually justify AI investment.

Joe Donovan (CYMI Holdings)

Sharon Li (Spindrift Management)

Daniel Amberger (Single Family Office)

Moderated by Dr. Tania Nield.

This discussion is available on our podcast (full version on the members’ podcast) and in our conference replays.

1. The Platform Race: Copilot vs. ChatGPT

Dan’s global SFO is running both engines because neither is winning consistently.

Copilot gives native access to Microsoft data (SharePoint, OneDrive, the works).

ChatGPT continues to lead on reasoning and innovation.

Then Copilot pulled GPT-5 into its stack—and suddenly the gap shrank again.

Everything is enterprise-grade; nothing trains on FO data. Security posture: aggressive.

The long-term winner? Still undecided. The race resets every quarter.

2. The Point-Solution Purist

Sharon ignored the platform debate entirely and went straight for the operational bottleneck: capital statements, K-1s, trust allocations, and Archway ingestion.

Canoe and Arch handled ~50% of the workflow.

Half-measures weren’t acceptable.

She partnered with Mantle—trusted team, right timing—and built an end-to-end system that retrieves, reads, allocates, and pushes everything directly into Archway.

Prototype: 6 months.

Refinement: 12 months.

Outcome: a full-stack solution, not an add-on.

3. The Hybrid Operator

Joe, also on Archway, came to the same document problem but took a different route: adopt a point tool, then extend it with general AI.

His office selected Arch for alt-data ingestion—clear ROI and emerging data leverage—while simultaneously rolling out ChatGPT + Copilot to everyone. A bottom-up model: powered by curiosity, not mandates.

Teams now:

- build internal workflows,

- generate code to bypass Archway reporting gaps,

- automate document handling via Power Automate,

- standardize investment due diligence (DD) processing.

4. What the Audience Is Actually Doing

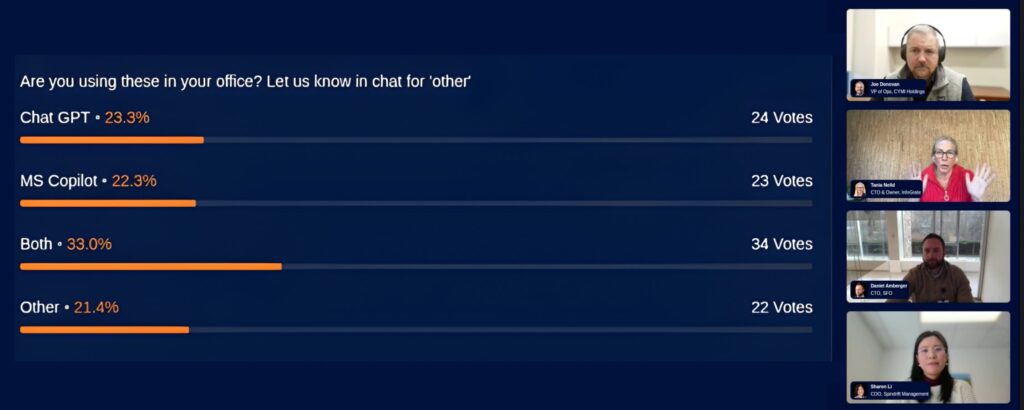

A live audience poll reinforced what the panelists were describing in practice.

- 33% of attendees reported running both Copilot and ChatGPT in parallel

- among those choosing a single tool, usage was split almost evenly between Copilot and ChatGPT

- ~20% reported not using either platform yet

The takeaway: most family offices aren’t committing to a single “winner.” They’re hedging—testing both, learning both, and delaying hard platform decisions until the value is clearer.

5. Selecting AI Projects: The ROI Filter

Dan puts every idea through two screens:

1. Is the problem big enough?

2. Is AI the right weapon?

If the economics don’t land, the idea doesn’t move. High-priority domains:

- contracting (legal costs),

- bank recs (pattern matching),

- payables,

- internal agents that replace vendor sprawl.
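The two screens can be read as a simple gate that an idea must clear twice. The sketch below is a minimal illustration in Python, not Dan's actual process: the `Idea` fields, the dollar threshold, and the sample ideas and figures are all hypothetical, since the panel cited no numbers.

```python
from dataclasses import dataclass


@dataclass
class Idea:
    name: str
    annual_cost_of_problem: float  # hypothetical: what the problem costs per year, in USD
    ai_fit: bool                   # hypothetical: a judgment call on whether AI suits the task


# Hypothetical threshold; each office would set its own.
MIN_PROBLEM_SIZE_USD = 50_000


def passes_screens(idea: Idea, min_size: float = MIN_PROBLEM_SIZE_USD) -> bool:
    """Return True only if the idea clears both screens."""
    big_enough = idea.annual_cost_of_problem >= min_size  # screen 1: is the problem big enough?
    return big_enough and idea.ai_fit                      # screen 2: is AI the right tool?


# Illustrative ideas with made-up figures.
ideas = [
    Idea("contract review", 120_000, True),
    Idea("meeting-room booking", 2_000, True),
    Idea("bank recs", 80_000, True),
    Idea("relationship calls", 90_000, False),
]

shortlist = [idea.name for idea in ideas if passes_screens(idea)]
print(shortlist)  # ['contract review', 'bank recs']
```

An idea that is large but a poor fit for AI fails just as surely as one that fits AI but is too small to matter.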

6. ROI: The Most Elusive Metric

Everyone agrees on one thing: general-purpose AI ROI is hard to pin down.

How do you measure faster emails, cleaner summaries, or “thinking partner” value?

Most teams use usage as a proxy.

For point solutions, ROI is clearer: hours saved on capital statements, fewer external legal hours, reduced document ingestion labor, faster reconciliations and reporting.
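For a point solution, the arithmetic is straightforward once hours saved are tracked. The sketch below assumes a simple model (labor savings minus tool cost, divided by tool cost); the function name, parameters, and figures are illustrative and were not cited by the panel.

```python
def point_solution_roi(hours_saved_per_year: float,
                       loaded_hourly_cost: float,
                       annual_tool_cost: float) -> float:
    """Return ROI as a fraction: (labor savings - tool cost) / tool cost."""
    if annual_tool_cost <= 0:
        raise ValueError("annual_tool_cost must be positive")
    savings = hours_saved_per_year * loaded_hourly_cost
    return (savings - annual_tool_cost) / annual_tool_cost


# Hypothetical inputs: 600 hours saved at $100/hour against a $40,000 tool.
roi = point_solution_roi(600, 100.0, 40_000.0)
print(f"{roi:.0%}")  # 50%
```

The same calculation is not available for general-purpose AI, because "hours saved" on emails or summaries is rarely measured, which is why usage ends up standing in for value.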

Bottom line:

AI that automates defined workflows delivers measurable ROI.

AI that enhances thinking is harder to quantify—but still valuable.

7. Security: The Hard Line

Dan’s office is “borderline paranoid” (the FO won’t even be named publicly), and it shows.

Their rules:

- only enterprise versions of Copilot and ChatGPT

- zero tolerance for models that train on FO data

This is the most conservative posture on the panel. It also reflects a key theme: security shapes everything, including rollout speed.

8. The Last Mile: Customizing Workflows

Joe: Uses AI to generate clean, repeatable, code-driven transformations, not just ad hoc prompts. His team is stitching data from Archway into new standardized outputs: AI-generated programming instead of AI-generated text.

Sharon: Built the last mile into the solution—Mantle handles the allocations natively.

Dan: Evaluates whether internal teams can build that “last mile” via agents on top of ChatGPT/Copilot rather than buying a new tool.

Three different philosophies. Same outcome: eliminate manual grind.
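To make Joe's "code-driven transformation" idea concrete, here is a minimal sketch of the kind of script an LLM might be asked to produce: it reads a reporting export and emits a standardized summary. The CSV layout, column names, and values are invented for illustration and do not reflect Archway's actual export format.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal

# Hypothetical export; the column names and values are made up.
RAW_EXPORT = """Entity,Asset Class,Market Value
Trust A,Private Equity,"1,250,000.00"
Trust A,Public Equity,"500,000.50"
Trust B,Private Equity,"750,000.00"
"""


def standardize(raw_csv: str) -> list[dict]:
    """Aggregate market value by entity and return rows in a fixed schema."""
    totals = defaultdict(Decimal)
    for row in csv.DictReader(io.StringIO(raw_csv)):
        # Strip thousands separators before parsing the amount.
        value = Decimal(row["Market Value"].replace(",", ""))
        totals[row["Entity"].strip()] += value
    # Sort by entity name so the output is the same on every run.
    return [
        {"entity": entity, "total_market_value": total}
        for entity, total in sorted(totals.items())
    ]


for line in standardize(RAW_EXPORT):
    print(line["entity"], line["total_market_value"])
```

The point of generating a script rather than prompting each time is repeatability: the same input yields the same output on every run, and the logic can be reviewed once.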

9. The Training Divide

Sharon won’t roll out LLMs until the team is trained, aligned, and governed. She’s evaluating in-person courses, structured workshops, and sector-specific programs.

Dan is moving toward mandatory, programmatic, platform-agnostic training—focusing on prompting fundamentals. Internal bandwidth wasn’t enough; outsourcing is now on the table.

Joe uses LinkedIn Learning, Microsoft Learn, and peer-driven exploration. Modular, low-lift training that fits into real workloads.

Different models, same goal: raise the floor before pushing for advanced capability.

10. Culture: Efficiency vs. Job Security

Not every office admits it, but this panel did: AI can be a cost-cutting lever.

Some offices embrace that. Others frame AI as “empowerment, not reduction.”

The panel didn’t settle the debate, but it surfaced the tension that every SFO is quietly navigating.

THE TAKEAWAY

Three SFOs. Three contrasting AI philosophies.

- Dan → Dual-platform, enterprise-grade, ROI-driven.

- Sharon → Deep partnership, one major workflow solved completely.

- Joe → Hybrid ecosystem: point tool + LLM + automation + code generation.

No silver bullets—just informed choices.